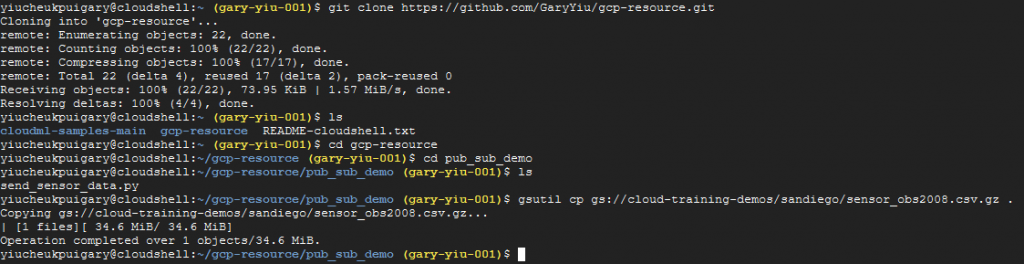

To build a streaming data pipeline on Google Cloud Platform (GCP), we will use Pub/Sub to simulate a streaming data source and load the data into BigQuery for analysis. Let’s start in GCP Cloud Shell.

Prerequisites:

- Clone the script files from GitHub

git clone https://github.com/GaryYiu/gcp-resource.git

cd ~/gcp-resource/pub_sub_demo

- Download sample data for streaming

gsutil cp gs://cloud-training-demos/sandiego/sensor_obs2008.csv.gz .

1. Create a Pub/Sub topic

First, we need to create a Pub/Sub topic. We will create it from Cloud Shell.

gcloud pubsub topics create sandiego

2. Create a subscription to the topic

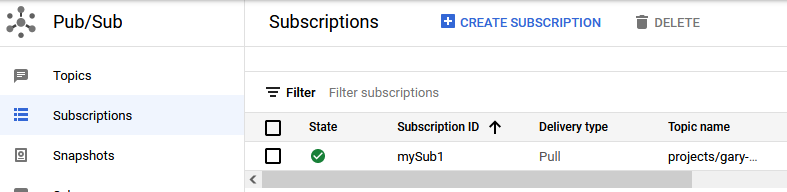

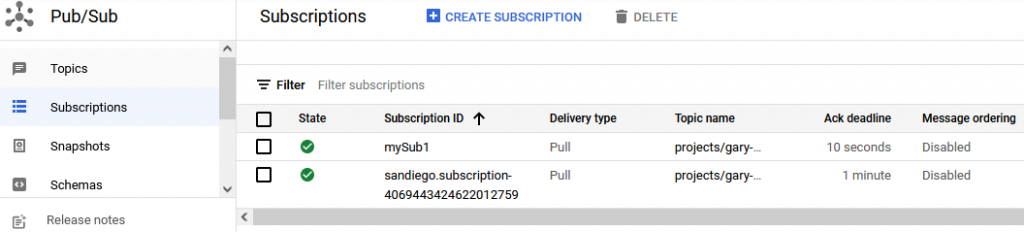

Next, we need a subscription so that we can receive data from the topic.

gcloud pubsub subscriptions create --topic sandiego mySub1

We can check the Pub/Sub console to verify that both the topic and the subscription exist.

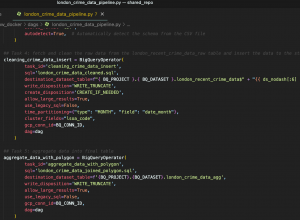
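The same check can also be scripted. The following is a minimal Python sketch, assuming the `google-cloud-pubsub` client library is installed and Cloud Shell credentials are available. The helper names (`topic_path`, `subscription_path`, `smoke_test`) are made up for illustration and are not part of the cloned repository. It publishes one test message to `sandiego` and tries to pull it back through `mySub1`.

```python
def topic_path(project_id, topic_id):
    """Build the fully qualified Pub/Sub topic name."""
    return f"projects/{project_id}/topics/{topic_id}"


def subscription_path(project_id, subscription_id):
    """Build the fully qualified Pub/Sub subscription name."""
    return f"projects/{project_id}/subscriptions/{subscription_id}"


def smoke_test(project_id, topic_id="sandiego", subscription_id="mySub1"):
    """Publish one test message and try to pull it back (hypothetical helper)."""
    # Imported here so the path helpers above work without the library installed.
    from google.cloud import pubsub_v1

    publisher = pubsub_v1.PublisherClient()
    # publish() returns a future; result() blocks until the message is accepted.
    message_id = publisher.publish(topic_path(project_id, topic_id), b"hello").result()

    subscriber = pubsub_v1.SubscriberClient()
    sub = subscription_path(project_id, subscription_id)
    response = subscriber.pull(
        request={"subscription": sub, "max_messages": 1}, timeout=10
    )
    received = [item.message.data for item in response.received_messages]
    if response.received_messages:
        # Acknowledge so the test message is not delivered again.
        subscriber.acknowledge(
            request={
                "subscription": sub,
                "ack_ids": [item.ack_id for item in response.received_messages],
            }
        )
    return message_id, received
```

A single pull can come back empty even when a message is waiting, so an empty `received` list on the first try does not necessarily mean the subscription is broken.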

3. Create the BigQuery dataset to store the streaming data

bq mk --dataset $DEVSHELL_PROJECT_ID:demos

4. Create a bucket for Dataflow staging

Dataflow needs a staging location for temporary files before the data is loaded into BigQuery, so let’s create a GCS bucket for that.

gsutil mb gs://$DEVSHELL_PROJECT_ID

5. Run a Dataflow job to subscribe to the topic

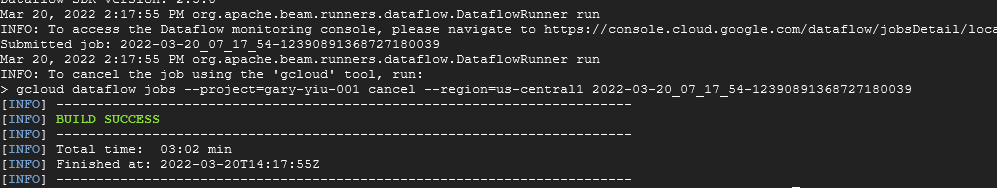

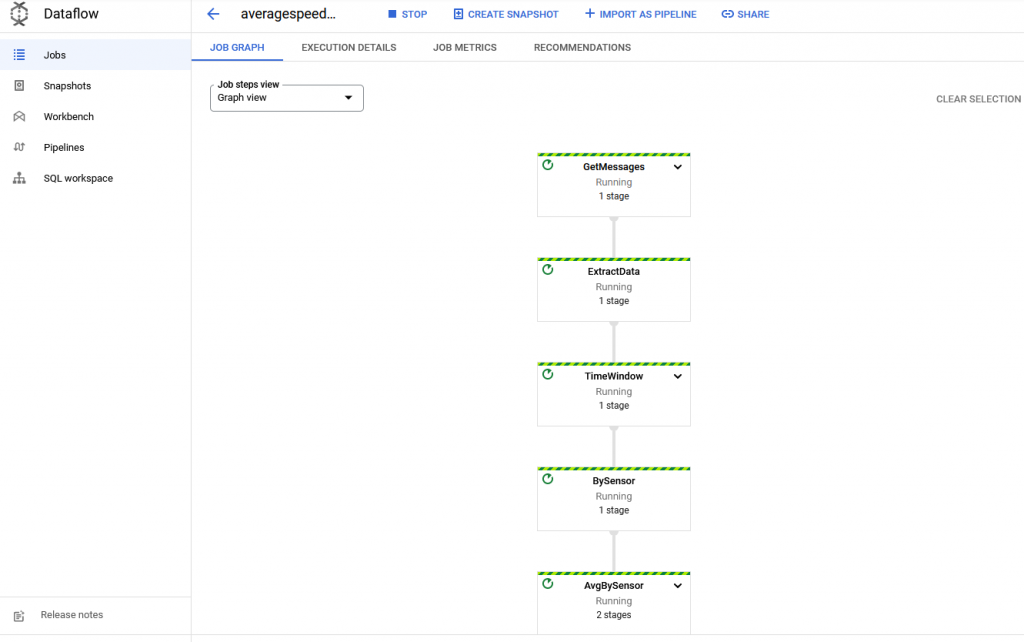

To run the Dataflow job, we use the run_oncloud.sh script. Before running it, make the file executable to avoid a permission-denied error. Once the build completes, a success message appears in Cloud Shell.

sudo chmod +x run_oncloud.sh

./run_oncloud.sh $DEVSHELL_PROJECT_ID $DEVSHELL_PROJECT_ID AverageSpeeds

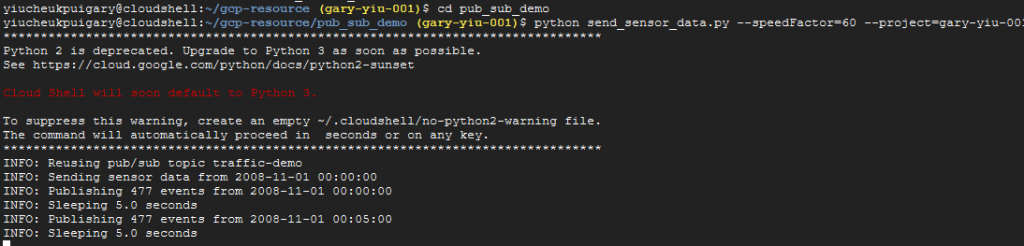

6. Start the streaming data simulation

Now we can start streaming data by running the simulation script, which publishes data to our topic every 5 seconds. The Dataflow job’s subscription then pulls the data from the topic. In the command below, replace gary-yiu-001 with your own project ID.

python send_sensor_data.py --speedFactor=60 --project=gary-yiu-001

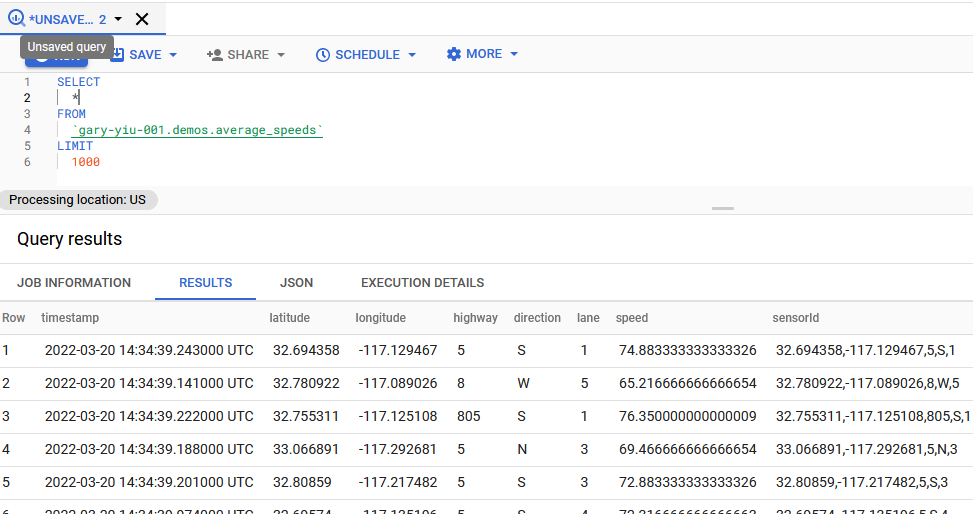
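To make the role of `--speedFactor` more concrete, here is a rough Python sketch of how a replay script could turn recorded timestamps into real-time delays. It is not the code of `send_sensor_data.py`. It assumes that the first CSV column is a timestamp in `YYYY-MM-DD HH:MM:SS` form and that the file starts with a header line, and the function names are invented for this example.

```python
import csv
import gzip
from datetime import datetime

TIME_FORMAT = "%Y-%m-%d %H:%M:%S"  # assumed timestamp layout of the first column


def replay_offsets(rows, speed_factor):
    """Yield (seconds_after_start, row): when to send each row in real time."""
    first = None
    for row in rows:
        event_time = datetime.strptime(row[0], TIME_FORMAT)
        if first is None:
            first = event_time
        # Divide elapsed event time by the speed factor to compress it.
        yield (event_time - first).total_seconds() / speed_factor, row


def read_rows(path):
    """Read data rows from a gzipped CSV, skipping an assumed header line."""
    with gzip.open(path, "rt", newline="") as handle:
        reader = csv.reader(handle)
        next(reader, None)
        yield from reader


# Made-up rows, five minutes apart, only to show the arithmetic.
demo = [
    ["2008-11-01 00:00:00", "70.1"],
    ["2008-11-01 00:05:00", "68.4"],
    ["2008-11-01 00:10:00", "71.0"],
]
offsets = [offset for offset, _ in replay_offsets(demo, speed_factor=60)]
print(offsets)  # [0.0, 5.0, 10.0]
```

With a speed factor of 60, one minute of recorded data is replayed in one second, so readings recorded five minutes apart would be sent five seconds apart, which would match the 5-second interval mentioned above.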

7. View data in BigQuery

SELECT * FROM `gary-yiu-001.demos.average_speeds` LIMIT 1000
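The same query can be run from Python to watch rows arrive while the simulation is running. This is only a sketch, assuming the `google-cloud-bigquery` client library is installed. `preview_query` and `preview_rows` are hypothetical helper names, and the dataset and table defaults are taken from the query above.

```python
def preview_query(project_id, dataset="demos", table="average_speeds", limit=1000):
    """Build the preview query for the table written by the Dataflow job."""
    return f"SELECT * FROM `{project_id}.{dataset}.{table}` LIMIT {int(limit)}"


def preview_rows(project_id, limit=10):
    """Run the preview query and return each row as a plain dict."""
    # Imported here so preview_query works without the library installed.
    from google.cloud import bigquery

    client = bigquery.Client(project=project_id)
    rows = client.query(preview_query(project_id, limit=limit)).result()
    return [dict(row.items()) for row in rows]
```

Calling `preview_rows` again after a minute or so should return more recent rows if the pipeline is still receiving data.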

We have successfully created a streaming data pipeline from Pub/Sub to Dataflow to BigQuery. Now let’s wrap up by deleting our topic and subscription and stopping the Dataflow job.
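One way to script that cleanup is sketched below in Python. It simply wraps `gcloud` commands, and the helper names are invented for this example. The job ID and region are placeholders that you would look up first, for example with `gcloud dataflow jobs list`. By default the sketch only prints the commands so they can be reviewed before anything is deleted.

```python
import subprocess


def cleanup_commands(job_id, region, topic="sandiego", subscription="mySub1"):
    """Return the gcloud commands that tear down the demo resources."""
    return [
        # Stop the streaming job first so nothing is still reading the topic.
        ["gcloud", "dataflow", "jobs", "cancel", job_id, f"--region={region}"],
        ["gcloud", "pubsub", "subscriptions", "delete", subscription],
        ["gcloud", "pubsub", "topics", "delete", topic],
    ]


def run_cleanup(commands, dry_run=True):
    """Print the commands, or execute them when dry_run is False."""
    for command in commands:
        if dry_run:
            print(" ".join(command))
        else:
            subprocess.run(command, check=True)


# JOB_ID and REGION are placeholders, not real values.
run_cleanup(cleanup_commands("JOB_ID", "REGION"))
```

The BigQuery dataset and the staging bucket are left in place here; remove them separately if they are no longer needed.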